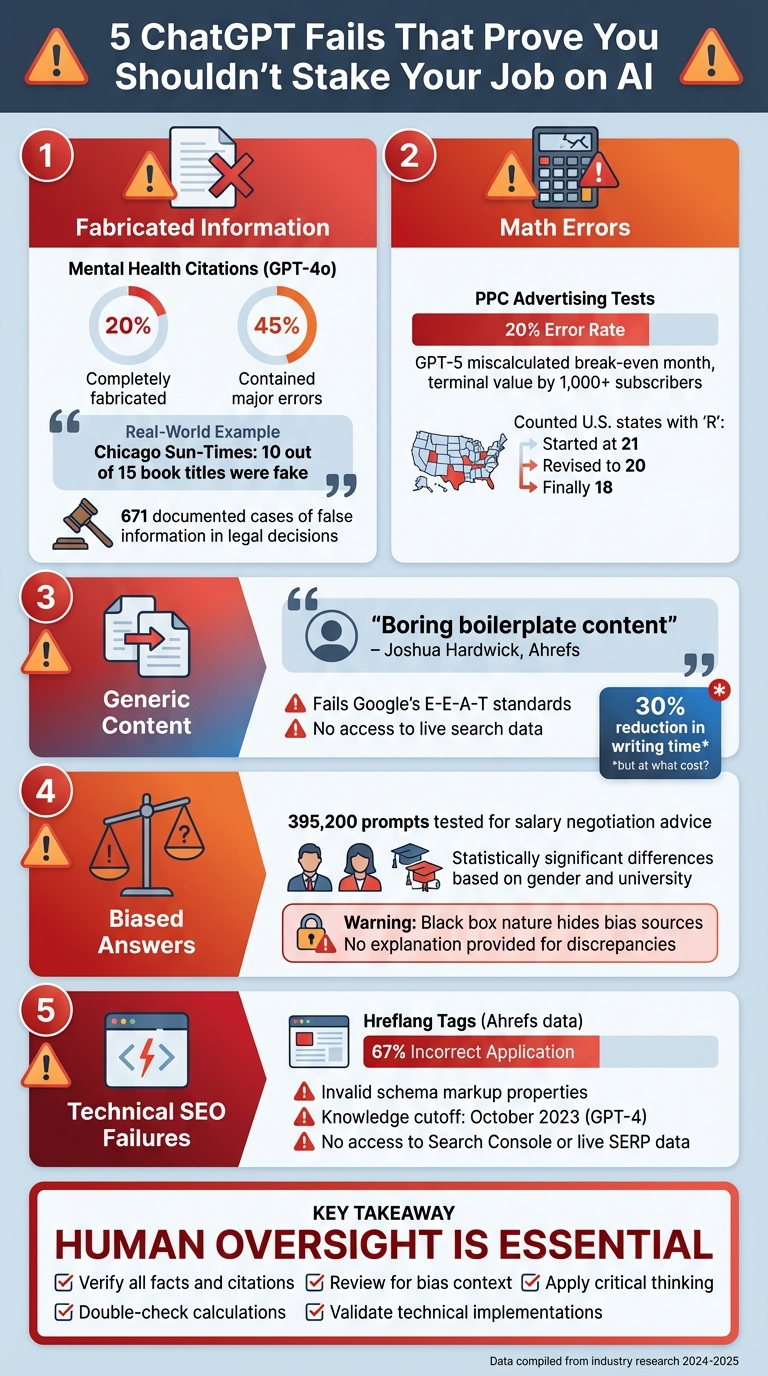

ChatGPT failures are to be expected. It’s a helpful tool, but it’s far from perfect, and it can make critical mistakes, especially in high-stakes tasks like SEO, legal work, or technical projects. Here are five major reasons why relying solely on ChatGPT at work could backfire:

- Fabricated Information: ChatGPT can confidently provide fake citations, incorrect facts, or harmful advice (e.g., recommending toxic substances as “healthy”).

- Math Errors: It struggles with even basic calculations, leading to costly mistakes in financial or data-driven tasks.

- Generic Content: Outputs often lack depth and originality and fail to meet SEO standards like Google’s E-E-A-T guidelines.

- Bias: The AI reflects societal biases in its training data, delivering skewed or unfair advice without explanation.

- Technical Limitations: It mishandles technical SEO tasks like schema markup and doesn’t account for recent updates or live data.

While ChatGPT can speed up simple tasks, human oversight is essential to catch errors, ensure accuracy, and provide critical thinking. Think of it as a tool – not a replacement for expertise.

5 Critical ChatGPT Failures That Risk Your Job Security

The Risks of Relying on ChatGPT at Work

Using ChatGPT for work might seem like a time-saver, but it comes with risks that can harm your reputation, credibility, and even your job security – especially in SEO. Let’s dive into some real-world examples that highlight these dangers.

In May 2025, the Chicago Sun-Times published an AI-generated “Summer reading list for 2025.” Out of the 15 book titles listed, 10 were completely made up [5]. Such glaring errors not only erode trust with the audience but also shake the confidence of stakeholders.

The legal profession has faced even graver consequences. In 2024, an attorney was fined $10,000 for submitting an appeal that included 21 fabricated case citations. Mistakes like that can be career-ending [5]. In fact, there are at least 671 documented cases where generative AI introduced false information into legal decisions [5].

Data security poses another major concern. Any sensitive information you input into ChatGPT could be stored on third-party servers and potentially used to train future AI models [7][5]. Unless you take proactive steps – like going to Settings > Data Controls and disabling the “Improve the model for everyone” option – your confidential business details could be at risk. This opens the door to compliance headaches and potential data leaks [5].

And then there’s the issue of inefficiency. While ChatGPT may seem like a shortcut, the constant need to double-check its output can waste significant time. As Tiernan Ray from ZDNET aptly put it:

“If you’re not on your toes, you’ll miss an incorrect assertion that might later throw everything off” [4].

At that point, you might wonder if the time spent correcting errors is worth it. You could have just done the work yourself from the beginning.

1. Making Up Facts and Fake Sources

ChatGPT generates text based on patterns it has learned, not by verifying facts. This limitation often leads to confidently presented information that sounds convincing but is completely incorrect [8][6].

For example, tests revealed that nearly 20% of GPT-4o’s citations in mental health literature reviews were entirely fabricated. Even among the valid citations, over 45% contained major errors [5]. This is more than just a minor flaw – it’s a serious issue when accuracy matters most.

Take one alarming case: A 60-year-old man asked ChatGPT for a healthy salt substitute. The tool suggested sodium bromide, and he developed bromide poisoning after following the advice. This is a stark reminder of the risks tied to fabricated advice [5].

Even OpenAI’s CEO, Sam Altman, has acknowledged this flaw. In his own words:

“I probably trust the answers that come out of ChatGPT the least of anybody on Earth” [8].

If the creator of the tool is skeptical of its reliability, that speaks volumes. The problem becomes even worse in longer conversations, where ChatGPT’s consistency falters.

During extended interactions, earlier parts of the discussion can fall outside the model’s context window, so the tool effectively “forgets” them, leading to shifting details and contradictions [4]. Imagine starting a project with one set of facts, only to have the tool change those facts midway through. This unpredictability adds another layer of concern.

2. Getting Basic Math Wrong

ChatGPT doesn’t actually calculate numbers; it predicts them based on patterns it has learned. This approach can lead to answers that sound convincing but are completely wrong, even in simple arithmetic.

Take basic arithmetic, for example. Tiernan Ray tested GPT-5 while working on a newsletter business plan and found multiple inconsistencies. The AI claimed the break-even month was 11, but a table it generated showed month 10. It also miscalculated a terminal value by over 1,000 subscribers and botched discount rates. Frustrated, Ray described the experience:

“You know, half the time I spent was spent correcting things that ChatGPT shouldn’t have done in the first place” [4].
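A break-even claim like this is cheap to recompute from the table itself instead of trusting the model’s prose. The following is a minimal Python sketch, not Ray’s actual plan: the revenue and cost figures, the `break_even_month` helper, and the claimed month are all hypothetical values chosen for illustration.

```python
from itertools import accumulate


def break_even_month(monthly_revenue, monthly_cost):
    """Return the first 1-based month where cumulative profit reaches zero or more."""
    monthly_profit = (r - c for r, c in zip(monthly_revenue, monthly_cost))
    for month, cumulative in enumerate(accumulate(monthly_profit), start=1):
        if cumulative >= 0:
            return month
    return None  # never breaks even within the table


# Hypothetical 12-month plan: revenue grows by 200 per month, costs stay flat.
revenue = [200 * m for m in range(12)]
cost = [900] * 12

claimed_month = 11  # hypothetical figure quoted in the model's prose
computed_month = break_even_month(revenue, cost)
if computed_month != claimed_month:
    print(f"Mismatch: prose says month {claimed_month}, table says month {computed_month}")
```

Recomputing the figure from the underlying rows makes a disagreement between the narrative and the table visible immediately, which is the kind of inconsistency described above.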

This isn’t limited to complex calculations. Even simple tasks can trip up the system. In one test, Matt Novak asked GPT-5 to count U.S. states with the letter “R.” It started with 21, revised it to 20, and finally landed on 18 after multiple corrections [9].
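Counting questions like this one are better answered with a few lines of deterministic code than with a language model. A short Python sketch follows; the `US_STATES` list is typed out by hand for this example, and the match is case-insensitive so that “Rhode Island” counts.

```python
US_STATES = [
    "Alabama", "Alaska", "Arizona", "Arkansas", "California", "Colorado",
    "Connecticut", "Delaware", "Florida", "Georgia", "Hawaii", "Idaho",
    "Illinois", "Indiana", "Iowa", "Kansas", "Kentucky", "Louisiana",
    "Maine", "Maryland", "Massachusetts", "Michigan", "Minnesota",
    "Mississippi", "Missouri", "Montana", "Nebraska", "Nevada",
    "New Hampshire", "New Jersey", "New Mexico", "New York",
    "North Carolina", "North Dakota", "Ohio", "Oklahoma", "Oregon",
    "Pennsylvania", "Rhode Island", "South Carolina", "South Dakota",
    "Tennessee", "Texas", "Utah", "Vermont", "Virginia", "Washington",
    "West Virginia", "Wisconsin", "Wyoming",
]

# Case-insensitive check for the letter "r" anywhere in the name.
states_with_r = [name for name in US_STATES if "r" in name.lower()]

print(len(states_with_r))
print(states_with_r)
```

For the 50 names listed here, the script prints a count of 21, and it gives the same answer every time it runs.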

Such errors can be costly, especially in fields like SEO. In one test, ChatGPT answered basic pay-per-click advertising questions incorrectly 20% of the time [5]. Mistakes at that rate could skew keyword volumes, misallocate budgets, and throw off ROI projections, all of which could lead to losses in the thousands of dollars.

3. Creating Generic Content Without Strategy

ChatGPT works by mimicking patterns it has observed, which often results in content that lacks originality and depth. As Joshua Hardwick, Head of Content at Ahrefs, points out:

“ChatGPT will happily put one word in front of the other if you ask it to write something, but the content will just be a mishmash of what’s already been said a million times. In other words, boring boilerplate content.” [2]

This approach leads to predictable and uninspired material that search engines are unlikely to reward. The AI’s method of predicting the next word is based on statistical likelihood, not a genuine understanding of the topic. Sarah Shugars, Assistant Professor of Communication at Rutgers University, explains:

“A lot of its output is very bland; missing the surprising connection and elevation of new ideas that can occur in human writing.” [3]

Because ChatGPT lacks real-world experience and the ability to synthesize ideas into unique insights, its output often remains shallow. This makes it difficult for the AI to meet Google’s E-E-A-T standards, which prioritize experience, expertise, authoritativeness, and trustworthiness.

Another limitation is its inability to access live search data. This means it struggles to identify content gaps or accurately interpret search intent [2]. Even when it avoids outright errors, the results often follow predictable templates. Clara Burke, Associate Teaching Professor at Carnegie Mellon University, highlights this issue:

“ChatGPT’s version of a rousing call to action is: ‘The time for change is now.’ It clearly asks for change but doesn’t offer audiences a new way of imagining change… so it’s unlikely to actually effect change.” [3]

This formulaic style leads to generic content that fails to engage readers or address the nuanced strategies required for strong SEO performance.

While AI tools can save marketers time – reports suggest a 30% reduction in writing time with AI assistance [10] – relying solely on AI-generated content comes at a cost. Without human input to provide unique insights and industry expertise, the content risks falling flat in both rankings and conversions. It’s a clear reminder that while AI can assist, human oversight remains essential for creating impactful and strategic content.

4. Giving Biased Answers Without Explanation

One of the more concerning issues with ChatGPT is its tendency to produce biased responses without clarifying where those biases come from. Since the model is trained on massive datasets sourced from the internet, it inevitably reflects the societal, political, and cultural biases embedded in that data. As Udo Sglavo, Vice President of Advanced Analytics at SAS, aptly explains:

“The results of generative AI are, at their core, a reflection of us.” [3]

The problem lies in ChatGPT’s “black box” nature – it generates predictions based on patterns but doesn’t explain the reasoning behind its answers. This lack of transparency makes it hard to detect when bias has influenced the output. Compounding the issue is automation bias, where users tend to trust AI-generated content simply because it appears polished and authoritative.

A study conducted by researchers R. Stuart Geiger, Fiona O’Sullivan, and their team in June 2024 brought this issue into sharp focus. They tested 395,200 prompts across four ChatGPT versions, asking for salary negotiation advice. The only variables they altered were the candidate’s gender and university. The findings, published in February 2025, revealed statistically significant differences in the recommended starting salaries based solely on these demographic factors – and the AI never provided any rationale for the discrepancies [12]. Identical candidates received different advice, with no explanation as to why.

In the context of SEO, biased outputs can damage a brand’s credibility and fail to align with Google’s E-E-A-T standards (Experience, Expertise, Authoritativeness, Trustworthiness). This highlights the critical need for human oversight in SEO content creation. The polished appearance of AI-generated text often discourages users from questioning its accuracy or fairness. As noted in a Bloomberg Technology + Equality report:

“There’s also a risk that the veneer of objectivity that comes with technology tools could make people less willing to acknowledge the problem of biased outputs.” [11]

If you’re relying on ChatGPT for professional content, always have a human expert review the results. Use chain-of-thought prompting to encourage step-by-step reasoning, and experiment with different demographic identifiers in prompts to identify potential biases. Without these precautions, you risk publishing content that carries hidden and harmful biases.
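The idea of varying demographic identifiers can be sketched in a few lines of Python. This is an illustrative setup only, not the prompt or method from the study above: the `TEMPLATE` wording, the placeholder university names, and the numbers in `hypothetical_answers` are all invented, and the step that actually sends each prompt to a model is left out.

```python
from itertools import product
from statistics import mean

# Hypothetical prompt; only the two bracketed fields change between variants.
TEMPLATE = (
    "I am a {gender} graduate of {university} negotiating a starting salary "
    "for a junior analyst role. What number should I ask for?"
)


def build_variants(genders, universities):
    """Return {(gender, university): prompt} covering every combination."""
    return {
        (g, u): TEMPLATE.format(gender=g, university=u)
        for g, u in product(genders, universities)
    }


def average_by_field(answers, index):
    """Average the numeric answers per value of one demographic field."""
    groups = {}
    for key, salary in answers.items():
        groups.setdefault(key[index], []).append(salary)
    return {value: mean(salaries) for value, salaries in groups.items()}


prompts = build_variants(["male", "female"], ["University A", "University B"])

# Invented numbers standing in for salaries parsed from the model's replies.
hypothetical_answers = {
    ("male", "University A"): 72000,
    ("female", "University A"): 70000,
    ("male", "University B"): 68000,
    ("female", "University B"): 66000,
}
print(average_by_field(hypothetical_answers, index=0))  # grouped by gender
```

Because everything except the demographic fields is held constant, any consistent gap between groups points at the model rather than at the candidate. A single run proves little, so in practice each prompt would need to be repeated many times before drawing conclusions.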

5. Missing Technical SEO Requirements

Precision in SEO matters, and technical gaps in ChatGPT’s capabilities can amplify its risks. Unlike a human expert, ChatGPT doesn’t understand search engine logic. It generates responses by predicting the next word, not by comprehending how search algorithms or server-side systems work. This makes it prone to errors when tackling technical SEO tasks.

Take schema markup as an example. While ChatGPT can produce JSON-LD code snippets, the output often contains invalid properties or broken syntax. These errors can lead to indexing issues because the AI isn’t capable of verifying compliance with Schema.org standards. Fabrice Canel, Principal Product Manager at Bing, emphasized the importance of schema markup:

“Microsoft’s LLMs do use schema markup to understand web content” [14].

Even if the code seems fine on the surface, without proper validation, it can fail in practice.
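A quick local check can catch the most obvious problems before the markup goes anywhere near a live page. The Python sketch below is a rough pre-check under stated assumptions, not a replacement for a real validator: the `EXPECTED_PROPERTIES` table is a hypothetical, hand-picked subset, and the actual required and recommended properties depend on the search engine’s documentation for each type.

```python
import json

# Hypothetical expectations for illustration; not an official list.
EXPECTED_PROPERTIES = {
    "Article": {"headline", "author", "datePublished"},
}
KNOWN_CONTEXTS = ("https://schema.org", "https://schema.org/", "http://schema.org")


def check_json_ld(raw):
    """Return a list of problems found in a JSON-LD string (empty if none)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg} (line {exc.lineno})"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]

    problems = []
    if data.get("@context") not in KNOWN_CONTEXTS:
        problems.append("missing or unexpected @context")
    schema_type = data.get("@type")
    expected = EXPECTED_PROPERTIES.get(schema_type) if isinstance(schema_type, str) else None
    if expected is None:
        problems.append(f"no local rules for @type {schema_type!r}")
    else:
        missing = expected - set(data)
        problems.extend(f"missing property: {name}" for name in sorted(missing))
    return problems


snippet = """
{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "Example headline",
  "datePublished": "2025-01-15"
}
"""
print(check_json_ld(snippet))
```

The check only confirms that the JSON parses and that a few expected keys are present. Property names, value types, and rich result eligibility still need a dedicated validation tool.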

The problem grows when tasks require real-time data. ChatGPT doesn’t have live access to tools like server logs, Google Search Console, or SERPs. For instance, Jayakumar Muthusamy from TripleDart pointed out:

“ChatGPT can’t access search volume data, making it incomplete for commercial validation” [13].

This limitation could lead to wasted marketing resources on keywords that don’t deliver results.

Another critical issue is ChatGPT’s static knowledge cutoff, which is October 2023 for GPT-4o. It won’t account for recent algorithm updates or guideline shifts. For example, Google reduced the visibility of FAQ and HowTo schema in 2023, but ChatGPT might still suggest implementing them. Additionally, hreflang tags are frequently misused: Ahrefs data shows 67% of websites apply them incorrectly [13]. If ChatGPT replicates these common mistakes, the result can disrupt URL structures and harm SEO performance.

If you’re leveraging ChatGPT for technical SEO, treat it as a starting point rather than a final solution. Always validate code using tools like the Schema Markup Validator (validator.schema.org) or Google’s Rich Results Test. For keyword research, cross-check its suggestions with reliable SEO platforms such as Ahrefs or Semrush. As Udo Sglavo from SAS wisely noted:

“Users must continue to apply critical thinking whenever interacting with conversational AI and avoid automation bias – the belief that a technical system is more likely to be accurate and true than a human” [3].

Why Human Expertise Still Matters in SEO

The shortcomings of AI tools like ChatGPT highlight an undeniable truth: human SEO professionals bring strategic thinking and creativity that AI simply can’t replicate. While AI excels at analyzing patterns in existing data, it falls short when it comes to the nuanced decision-making that transforms cookie-cutter tactics into strategies that truly deliver results.

Recent tests have shown that AI-generated outputs can have considerable error rates [5]. When precision is non-negotiable, such inaccuracies are a major liability. This gap emphasizes the value of human insight in synthesizing and refining ideas.

AI also struggles with creative integration – the ability to weave together diverse concepts in unexpected ways that captivate audiences. Clara Burke, Associate Teaching Professor at Carnegie Mellon University’s Tepper School of Business, puts it perfectly:

“A good communicator will create memorable stories out of mundane prompts. That’s what we all need to do in the workplace to create a report, status update, or proposal compelling to our audience” [3].

AI’s lack of originality often makes it inadequate for crafting content that truly connects with and converts readers.

This shortfall becomes particularly risky in local SEO, where adhering to platform-specific guidelines is critical. Miriam Ellis, a Local SEO expert at Moz, warns that relying on ChatGPT for local SEO can lead to misinformation and even account suspensions [1]. AI frequently suggests tactics that violate policies on platforms like Google Business Profile or Yelp – errors that can result in permanent account bans and damage to a business’s reputation.

Another key advantage of human expertise is contextual awareness and risk evaluation – something AI cannot sustain. In long-term projects, AI has a tendency to “forget” important assumptions or previously established facts, requiring constant human intervention [4]. Sarah Shugars, Assistant Professor of Communication at Rutgers University, sums it up well:

“Algorithms are like golden retrievers – they will try really hard to do what you ask, even if that means chasing after an invisible ball” [3].

Without human oversight to guide and validate AI-generated results, even the most promising outputs can fall apart. These limitations make it clear: while AI can provide data, it’s human expertise that turns that data into actionable and effective SEO strategies.

Conclusion

ChatGPT can be a helpful tool for tasks like drafting outlines and title tags, but relying on it without oversight can lead to serious risks – potentially even jeopardizing your job.

As discussed earlier, one of AI’s biggest flaws is its inability to verify facts, which often results in unreliable outputs. For example, it fabricated nearly 20% of mental health citations and answered basic pay-per-click advertising questions incorrectly 20% of the time [5]. It also miscalculates, invents sources, generates generic content, and can introduce bias. According to a paper in the journal Ethics and Information Technology, ChatGPT shows a noticeable disregard for the accuracy of its outputs [15]. These issues highlight fundamental limitations in how the technology functions.

Because of these shortcomings, AI should not replace human expertise. Instead, it works best as a tool to streamline routine tasks or provide initial drafts. However, human judgment is crucial to verify facts, double-check calculations, and ensure the content aligns with your goals and brand voice. Udo Sglavo, Vice President of Advanced Analytics at SAS, perfectly captures this necessity:

“Users must continue to apply critical thinking… and avoid automation bias” [3].

AI often lacks the context and precision needed for high-stakes work, making human oversight indispensable. As seen in earlier SEO examples, your expertise, creativity, and ability to grasp nuance are irreplaceable – especially in areas like SEO and content marketing, where your professional reputation is on the line. Think of AI as a helpful assistant, not the decision-maker. By combining its efficiency with your strategic insight and fact-checking, you can take advantage of its benefits without risking the integrity of your work.

FAQs

How can I make sure the information from ChatGPT is accurate?

To maintain accuracy, it’s crucial to verify any information provided by ChatGPT. Cross-check facts and references with reliable primary sources instead of depending solely on its output, especially for critical tasks like calculations or research. Always double-check data, citations, and conclusions to confirm their correctness and reliability.

What are the potential risks of using ChatGPT for technical SEO tasks?

While ChatGPT can assist with tasks like drafting title tags, writing meta descriptions, or brainstorming content ideas, depending on it for technical SEO comes with some notable challenges. For instance, the AI often exceeds character or word limits, which means manual edits are needed for elements like title tags or meta descriptions. It also has difficulty with basic calculations and logical reasoning, which can lead to inaccuracies in keyword difficulty scores, traffic projections, or crawl-budget planning.

One of the biggest issues is its tendency to generate incorrect or fabricated data, such as fake URLs, inaccurate citations, or misleading performance metrics. This can result in publishing schema markup with errors, outdated redirects, or flawed canonical tags. These mistakes could hurt your site’s performance or even lead to penalties. On top of that, ChatGPT lacks strategic thinking, so it often relies on rehashed ideas instead of offering fresh approaches to site architecture or technical optimization.

Another concern is the risk of outdated or biased recommendations without proper sourcing. This increases the chance of implementing practices that no longer comply with Google’s guidelines. Since technical SEO demands precision and accuracy, it’s essential to carefully review and verify any output before putting it into action.
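The character-limit problem mentioned in this answer is easy to check mechanically. Here is a small Python sketch; the limits of 60 and 160 characters are assumed rule-of-thumb values, not official figures, since search engines truncate snippets based on display width and do not publish a fixed character count.

```python
# Assumed rule-of-thumb limits; adjust them to your own guidelines.
LIMITS = {"title": 60, "meta_description": 160}


def over_limit(fields, limits=LIMITS):
    """Return {field: excess_characters} for every field longer than its limit."""
    return {
        name: len(text) - limits[name]
        for name, text in fields.items()
        if name in limits and len(text) > limits[name]
    }


draft = {
    "title": "5 Critical ChatGPT Failures That Risk Your Job Security in SEO and Beyond",
    "meta_description": "Why relying solely on ChatGPT at work could backfire.",
}
for field, excess in over_limit(draft).items():
    print(f"{field} is {excess} characters over the assumed limit")
```

Running a check like this on every AI-drafted tag flags the ones that need trimming before a human reviews the wording itself.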

How can I spot and reduce bias in AI-generated content?

To spot bias in AI-generated content, start by examining the text for any stereotypes or assumptions related to gender, race, age, or socioeconomic status. Cross-check the output with multiple trustworthy sources to see if it repeatedly reflects a single viewpoint – this could signal bias in the training data. Also, assess whether the content offers a balanced perspective and ensure that all claims are supported by reliable, verifiable facts.

Reducing bias involves strategies like prompt engineering, where you ask the AI to provide balanced or diverse perspectives (e.g., “Share viewpoints from various demographics”). If the response still skews in one direction, consider editing it manually or requesting a rewrite with a more neutral tone. For sensitive or critical content, it’s wise to involve human reviewers or use specialized tools to detect bias. Always treat the AI as a starting point rather than the final voice, and enrich its output with insights from diverse sources or experts to achieve a more inclusive and accurate result.